Cost-Efficient E-Commerce Architecture: Doing More with a Fixed Budget

Scale e-commerce while cutting cloud OPEX by 20–30% and saving €40k+ annually. Learn to transform infrastructure into a margin-protecting growth engine by prioritizing optimization over hardware under a fixed budget.

E-commerce teams can grow order volume and still lose the CFO’s confidence. The reason is familiar: costs rise quietly in the background. Cloud bills increase, paid intermediaries keep charging, and non-production environments consume more spend than anyone expects. Over time, the online channel starts to look less like a business line and more like an expense that keeps drifting upward.

That is why ecommerce cost optimization keeps coming up in executive conversations. Leaders want growth, but they also want predictability. They want to reduce cloud OPEX without slowing delivery or taking on extra risk.

The Client faced this exact tension. The budget was locked. The online channel was expected to scale across markets and handle peaks, yet adding servers was not a safe plan. Cost savings had to come from better engineering choices, clearer ownership, and fewer recurring fees. FlexMade approached cost the same way we approach performance: find the bottleneck, remove waste, and measure the result.

As a result of our collaboration, infrastructure OPEX dropped by roughly 20–30% while the platform supported far higher volume. Intermediary fees worth €40–45k per year were removed by bringing key flows into the core platform.

This article explains the patterns behind those savings. It also shows a practical way to estimate impact before changes go live, so CFOs and CTOs can align on ROI.

The Starting Point: Rising Costs Under Growth Pressure

Before ecommerce cost optimization became a defined priority, infrastructure spending followed traffic growth almost automatically. As order volume increased, new instances were provisioned. When queries slowed down, compute was upgraded. When integrations failed, additional services were layered on top. Each action solved an immediate issue, yet the total cloud footprint kept expanding.

At that stage, the online channel still represented a small share of total revenue, yet its operational profile was becoming heavier. Infrastructure OPEX hovered around €6,900–7,000 per month. Some of that spend was necessary, while some of it reflected habits formed during earlier phases of growth.

Database Load Without Targeted Optimization

Query performance issues were often handled through vertical scaling. Larger instances temporarily improved response time, yet the underlying schema and index structure remained unchanged. That approach translated directly into higher monthly cost.

A focused database indexing review had not yet taken place. Missing or suboptimal indexes forced the system to process more data than required. Instead of refining the schema and query paths, the platform absorbed the inefficiency through larger compute capacity. This pattern made it harder to reduce cloud OPEX later because the baseline had already expanded.

Limited Caching Coverage

The caching configuration covered only selected endpoints. Repeated reads and predictable traffic patterns still reached origin services more often than necessary. Under normal load this was manageable, but under peak load it triggered autoscaling events and higher infrastructure spend.

Without systematic TTL tuning and hot-path analysis, the platform paid for compute cycles that could have been avoided. Cache gaps translated directly into higher OPEX.
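Hot-path analysis can start from something as simple as an access log. The Python sketch below ranks the most requested routes that are not yet cached and reports each route's share of traffic. The route names and the cached set are hypothetical. The sketch also assumes that paths have already been normalized to route templates such as `/product/{id}`. None of these are details from the Client's platform.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple


def uncached_hot_paths(
    request_paths: Iterable[str],
    cached_paths: Set[str],
    top_n: int = 5,
) -> List[Tuple[str, float]]:
    """Return up to top_n most-requested paths that bypass the cache, with their traffic share."""
    counts = Counter(request_paths)
    total = sum(counts.values())
    if total == 0:
        return []
    # most_common() sorts paths by request count, highest first.
    uncached = [(path, n) for path, n in counts.most_common() if path not in cached_paths]
    return [(path, n / total) for path, n in uncached[:top_n]]


# Hypothetical log, already normalized to route templates.
log = ["/product/{id}"] * 50 + ["/price/{sku}"] * 30 + ["/cart"] * 15 + ["/checkout"] * 5
result = uncached_hot_paths(log, cached_paths={"/product/{id}"}, top_n=2)
print(result)
```

In this made-up log, the uncached pricing route carries 30% of requests. That makes it a more natural first candidate for cache coverage and TTL tuning than a rarely requested endpoint.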

Overprovisioned Non-Production Environments

Dev and Stage environments mirrored production too closely in resource allocation. Their workloads did not justify identical capacity, yet infrastructure right-sizing had not been applied outside production.

As a result, fixed monthly cost accumulated in environments that were used intermittently. These environments contributed steadily to the total cloud invoice.

Paid Intermediaries in Core Workflows

Parts of invoicing and synchronization logic relied on paid proxies. These services handled important flows, yet they introduced annual fees and additional latency. Their cost scaled with usage, which reduced transparency around true cost per order.

This structure also complicated FinOps work for the ecommerce channel. When third-party layers sit between core systems, attributing cost becomes less precise. That lack of visibility limits the ability to model cost optimization scenarios accurately.

Individually, each of these factors looked manageable. Together, they created a system in which spending expanded alongside demand. Leadership recognized that continuing in this direction would erode margin over time. The budget constraint forced a different question: how to support growth while actively reducing cloud OPEX.

Budget Is a Coach

Fixed budgets change how teams think about ecommerce cost optimization. A variable budget makes it easy to treat cloud spend as a safety net. A locked budget forces prioritization, because every extra server competes with product work, testing capacity, and operational stability.

Overspending often hides the issues. Extra servers can mask inefficient queries, missing indexes, and poorly scoped caching. Costs still rise, but the root cause stays in place. The platform becomes more expensive to run, and performance gains stop being proportional to spend. A cost-efficient architecture does the opposite. It makes improvements in response time and stability visible in the monthly bill.

For the Client, the budget was locked early. The platform still had to grow, support peak periods, and run reliably across markets. This forced a different operating model: decisions were evaluated through performance per euro. If an improvement did not lower cost, reduce risk, or remove recurring fees, it rarely survived prioritization.

FlexMade used the budget limit as a decision filter. If a performance issue appeared, the first question was about root cause. A slow checkout path triggered analysis of queries, indexes, and caching coverage. A traffic spike triggered review of hot paths, rate limits, and CDN configuration. A recurring incident triggered work on queues, retries, and observability. Spending increased only when the underlying work was already done and the remaining gap was capacity.

That approach delivered a consistent result. Infrastructure OPEX dropped by roughly 20–30% while order volume grew. Development and staging environments were moved to more affordable hosting, saving €400–500 per month without touching production. Recurring proxy fees worth €40–45k per year were removed by bringing key workflows into the platform. Each improvement supported the same goal: cost optimization that keeps scale viable while protecting ROI.

Decision Matrix: Optimize vs. Scale Out

Every growing commerce platform eventually faces the same decision. A performance issue appears, traffic increases, or a peak event exposes latency. The immediate reaction is often to add servers. That reaction feels safe because it produces visible capacity. However, scaling out without analysis increases long-term cloud commitments and makes it harder to reduce cloud OPEX later.

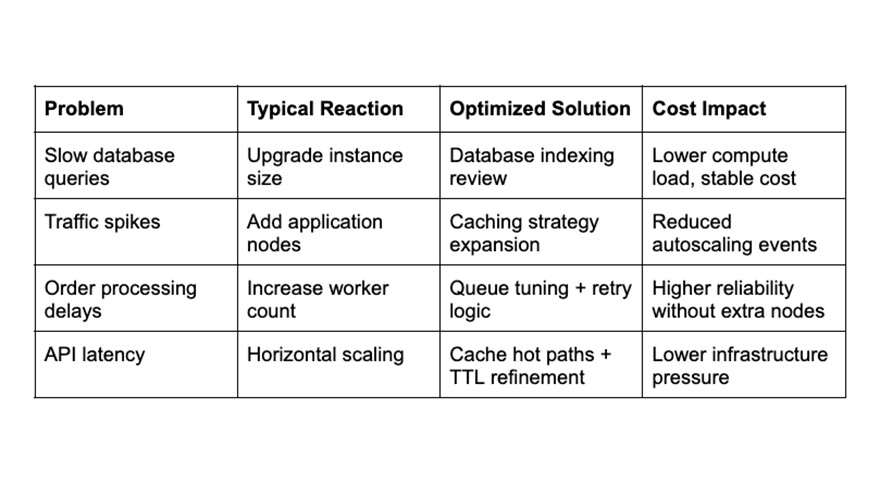

Ecommerce cost optimization requires a different decision model. Before capacity increases, the team evaluates whether the issue can be solved through optimization. This approach became formalized in the program as a recurring question: optimize first, scale second.

Below is the simplified decision matrix used to guide engineering choices.

Slow Queries: Index Before Upgrade

When checkout or catalog queries slowed down, the first step was a database indexing audit. Missing composite indexes and unoptimized joins were identified and corrected. Query plans improved, and CPU utilization dropped.

Without this step, the platform would have required larger database instances. That increase would have raised baseline monthly spend. Instead, optimization stabilized performance while preserving existing capacity.
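The article does not name the database engine, so this sketch uses Python's built-in `sqlite3` module as a stand-in to show the core of an indexing audit. It reads the query plan, adds a composite index that matches the query's equality filters, and reads the plan again. The `orders` table, its columns, and the index name are hypothetical. Other engines expose the same idea through their own `EXPLAIN` commands.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return SQLite's EXPLAIN QUERY PLAN output as one readable string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # Each row has four columns; the last one is the human-readable detail text.
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, "
    "status TEXT, created_at TEXT, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, status, created_at, total) VALUES (?, ?, ?, ?)",
    [(i % 500, "paid" if i % 3 else "pending", f"2024-01-{i % 28 + 1:02d}", i * 1.5)
     for i in range(10_000)],
)

sql = "SELECT id, total FROM orders WHERE customer_id = ? AND status = ?"
before = query_plan(conn, sql, (42, "paid"))  # full table scan

# Composite index matching both equality filters of the hot query.
conn.execute("CREATE INDEX idx_orders_customer_status ON orders (customer_id, status)")
after = query_plan(conn, sql, (42, "paid"))   # index search

print("before:", before)
print("after: ", after)
```

The plan changes from a scan of every row to a search through the new index. This is the kind of change that can lower CPU load without a larger instance.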

Traffic Peaks: Cache Before Adding Nodes

Traffic spikes during campaigns created temporary load surges. Previously, the default response would have been to add application nodes. Under the new decision model, the team reviewed caching coverage first.

Hot paths, such as product detail reads and pricing endpoints, were analyzed. Expanding cache coverage and tuning TTL values reduced origin calls. Autoscaling stabilized, and infrastructure right-sizing became realistic instead of reactive.
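The interaction between TTL and origin load can be illustrated with a minimal in-process read-through cache. This is only a sketch: the class, the simulated clock, and the `load_product` origin call are hypothetical. A production setup would more likely use a shared cache or CDN with eviction, which this sketch omits.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """In-process read-through cache with a fixed time-to-live per entry (no eviction)."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}  # key -> (expires_at, value)
        self.hits = 0
        self.misses = 0

    def get_or_load(self, key: Hashable, load: Callable[[Hashable], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Missing or expired entry: call the origin and store the fresh value.
        self.misses += 1
        value = load(key)
        self._entries[key] = (now + self.ttl, value)
        return value

    @property
    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


# Simulated clock: one request per second for 100 seconds against one product.
fake_now = [0.0]
origin_calls = []


def load_product(key):
    origin_calls.append(key)  # each call here stands for one request to the origin
    return {"sku": key, "price": 19.99}


cache = TTLCache(ttl_seconds=30, clock=lambda: fake_now[0])
for second in range(100):
    fake_now[0] = float(second)
    cache.get_or_load("sku-42", load_product)

print(len(origin_calls), cache.hit_ratio)
```

With a 30-second TTL, 100 requests reach the origin only 4 times. Raising the TTL to 60 seconds would halve that, but prices could then be up to a minute stale. TTL tuning means choosing that trade-off per endpoint.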

Processing Delays: Refine Queues Before Scaling Workers

Order workflows sometimes experienced delays under burst load. Adding more workers was possible, yet it would have increased compute cost permanently. Instead, queue configuration and retry logic were refined. Observability improvements helped identify bottlenecks.

This adjustment increased throughput within the same resource envelope. It also improved FinOps transparency by linking system load directly to measurable events.
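The article says that retry logic was refined, but it does not say how. One widely used pattern is capped exponential backoff with full jitter, sketched below. It is shown as an example of the technique, not as the Client's implementation. `TransientError`, `flaky_invoice_sync`, and the delay parameters are hypothetical.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, throttling, lock contention)."""


def retry_with_backoff(
    task: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.2,
    max_delay: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Run task, retrying transient failures with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    rng = rng or random.Random()
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and let the caller decide (e.g. park the message)
            # The delay ceiling doubles each attempt (0.2s, 0.4s, 0.8s, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter spreads retries so failed messages do not retry in lockstep.
            sleep(rng.uniform(0, ceiling))
    raise AssertionError("unreachable")


attempts = {"n": 0}


def flaky_invoice_sync() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientError("upstream timeout")
    return "synced"


delays = []
result = retry_with_backoff(flaky_invoice_sync, sleep=delays.append, rng=random.Random(7))
print(result, attempts["n"], delays)
```

The jitter matters under burst load. Without it, many messages that failed at the same moment would retry at the same moment too, recreating the spike that caused the failures.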

API Latency: Reduce Calls Before Expanding Infrastructure

Some latency originated from repeated internal service calls. Instead of horizontal scaling, caching was extended to those internal responses. This change reduced total request volume across services and lowered total compute pressure.
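The article does not describe how internal responses were cached. A lightweight complement to a shared cache is per-request memoization: when several components rendering one page ask the same service for the same key, only the first call goes upstream. This is a different, simpler technique than the caching the article describes, and the class, the pricing data, and `fetch_price` are hypothetical.

```python
from typing import Any, Callable, Dict, Hashable


class RequestScopedCache:
    """Memoizes internal service calls for the lifetime of one incoming request."""

    def __init__(self, fetch: Callable[[Hashable], Any]):
        self._fetch = fetch
        self._results: Dict[Hashable, Any] = {}
        self.upstream_calls = 0

    def get(self, key: Hashable) -> Any:
        if key not in self._results:
            self.upstream_calls += 1
            self._results[key] = self._fetch(key)
        return self._results[key]


PRICES = {"sku-1": 19.99, "sku-2": 5.00}  # hypothetical pricing-service data


def fetch_price(sku: str) -> float:
    return PRICES[sku]  # stands in for a network call to an internal service


# One page render: header, recommendations, and cart widget all request prices.
pricing = RequestScopedCache(fetch_price)
lookups = ["sku-1", "sku-2", "sku-1", "sku-1", "sku-2"]
prices = [pricing.get(sku) for sku in lookups]
print(pricing.upstream_calls)
```

Because the cache is discarded after each request, there is no staleness to manage. The trade-off is that it only removes duplicates within a single request.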

The decision matrix created a repeatable habit. Every issue triggered an evaluation of optimization options before new infrastructure was approved. That discipline contributed directly to the 20–30% drop in infrastructure OPEX while order volume expanded significantly.
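The matrix can be written down as data, so the "optimize first, scale second" rule becomes explicit and reviewable. The sketch below is a hypothetical encoding of the four rows above. It follows the budget filter described earlier: capacity is considered only after the optimization step is done and a capacity gap remains. Symptom keys and action strings are illustrative.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Play:
    optimize_first: str      # what to try before buying capacity
    scale_out_fallback: str  # what to buy if a capacity gap remains afterwards


# Hypothetical encoding of the four rows of the decision matrix.
DECISION_MATRIX: Dict[str, Play] = {
    "slow_queries": Play("audit indexes and query plans", "upgrade database instance"),
    "traffic_peak": Play("expand cache coverage and tune TTLs", "add application nodes"),
    "processing_delay": Play("refine queue configuration and retries", "add workers"),
    "api_latency": Play("cache internal responses to cut call volume", "scale services horizontally"),
}


def next_action(symptom: str, optimization_done: bool, capacity_gap_remains: bool) -> str:
    """Optimize first, scale second: capacity is approved only after optimization."""
    play = DECISION_MATRIX[symptom]
    if not optimization_done:
        return play.optimize_first
    if capacity_gap_remains:
        return play.scale_out_fallback
    return "no new capacity; keep monitoring"


print(next_action("traffic_peak", optimization_done=False, capacity_gap_remains=True))
print(next_action("traffic_peak", optimization_done=True, capacity_gap_remains=True))
```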

Mini Calculator: How to Estimate Savings Before and After Optimization

Ecommerce cost optimization becomes easier to justify when the impact can be modeled before changes are implemented. CFOs and CTOs need a way to estimate how specific improvements will reduce cloud OPEX and affect margin.

Below is a practical framework that can be used to evaluate savings potential.

Step 1: Establish the Baseline

Start with the current monthly infrastructure OPEX. In the Client’s case, this figure ranged between €6,900 and €7,000 per month before targeted optimization work began.

Next, calculate the cost per order. Divide total cloud OPEX by average monthly orders. This metric creates visibility into performance per euro and ties technical cost directly to commercial output.

For example:

If infrastructure OPEX = €7,000

If monthly orders = 7,000

Cost per order = €1 in cloud spend

This number becomes the reference point.

Step 2: Identify Optimization Levers

Each optimization lever should be modeled separately. Common levers include:

- database indexing improvements that reduce CPU load

- expanded cache coverage that lowers origin calls

- infrastructure right-sizing that reduces baseline instance cost

- removal of paid intermediaries with recurring annual fees

Each lever should be translated into estimated percentage impact. For example, a database optimization that reduces CPU utilization by 20% may postpone an instance upgrade worth €800 per month.

Step 3: Model Two Scenarios

Create two projections for the same traffic forecast.

Scenario A: Scale out

In this scenario, higher traffic leads to larger instances or additional nodes. Cloud OPEX increases proportionally.

Scenario B: Optimize first

In this scenario, indexing, caching, and right-sizing absorb part of the traffic growth. Additional capacity is added only if it is still required after optimization.

Compare projected monthly OPEX under both scenarios. The difference represents potential savings.
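The two scenarios can be sketched as two small functions. Scenario A follows the text directly: OPEX grows in proportion to traffic. Scenario B needs two assumptions, both hypothetical parameters. `cost_reduction` is the cut to current OPEX from optimization. `absorbed_growth` is the traffic multiple the optimized setup can handle before new capacity is needed.

```python
def scale_out_opex(baseline: float, traffic_growth: float) -> float:
    """Scenario A: monthly OPEX grows in proportion to traffic."""
    return baseline * traffic_growth


def optimize_first_opex(
    baseline: float, traffic_growth: float, cost_reduction: float, absorbed_growth: float
) -> float:
    """Scenario B: optimization lowers the baseline and frees headroom.

    cost_reduction: fractional cut to current OPEX from indexing, caching, right-sizing.
    absorbed_growth: traffic multiple the optimized setup handles without new capacity.
    """
    optimized = baseline * (1.0 - cost_reduction)
    if traffic_growth <= absorbed_growth:
        return optimized
    # Beyond the headroom, capacity is added in proportion to the remaining growth.
    return optimized * traffic_growth / absorbed_growth


# Hypothetical forecast: traffic doubles against a €7,000 monthly baseline.
a = scale_out_opex(7_000, 2.0)
b = optimize_first_opex(7_000, 2.0, cost_reduction=0.20, absorbed_growth=1.5)
print(f"A: €{a:,.0f}  B: €{b:,.0f}  difference: €{a - b:,.0f} per month")
```

The inputs for Scenario B are the least certain part of the model. Running it with a pessimistic and an optimistic value for each parameter gives CFOs and CTOs a range rather than a single number.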

Step 4: Translate Monthly Savings Into Annual Impact

If infrastructure OPEX drops by 25%, the monthly saving accumulates across the year.

Example:

Baseline = €7,000 per month

25% reduction = €1,750 saved per month

Annual saving ≈ €21,000

If €40–45k per year in proxy fees is removed in parallel, total annual impact reaches roughly €61,000–66,000. This combined result reflects structured ecommerce cost optimization rather than incremental cost trimming.
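The Step 4 arithmetic can be turned into a small sensitivity table. It covers the 20–30% reduction range cited in this article and the €40–45k fee range, using the €7,000 example baseline. The function name is illustrative.

```python
def annual_impact(baseline_monthly: float, reduction: float, fees_removed_per_year: float) -> float:
    """Annual saving = monthly infrastructure saving x 12 + removed intermediary fees."""
    return baseline_monthly * reduction * 12 + fees_removed_per_year


for reduction in (0.20, 0.25, 0.30):
    low = annual_impact(7_000, reduction, 40_000)
    high = annual_impact(7_000, reduction, 45_000)
    print(f"{reduction:.0%} OPEX reduction: €{low:,.0f} to €{high:,.0f} per year")
```

At the 25% example point, this reproduces the €61,000–66,000 range. At 20% with €40k in fees, the total falls just below €60,000. Stating the assumed reduction alongside the headline figure is therefore useful.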

Step 5: Recalculate Cost Per Order After Optimization

If order volume increases while infrastructure cost decreases, cost per order also shrinks.

Example:

New OPEX = €5,500 per month

Monthly orders = 15,000

Cost per order ≈ €0.37
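A short sketch of Steps 1 and 5 together computes cost per order before and after, using the example figures above, and reports the relative change.

```python
def cost_per_order(monthly_opex: float, monthly_orders: int) -> float:
    """Cloud spend attributable to one order, in EUR."""
    if monthly_orders <= 0:
        raise ValueError("monthly_orders must be positive")
    return monthly_opex / monthly_orders


before = cost_per_order(7_000, 7_000)
after = cost_per_order(5_500, 15_000)
change = (after - before) / before
print(f"before €{before:.2f}, after €{after:.2f}, change {change:.0%}")
```

With these example figures, total OPEX falls by about 21%. Cost per order falls by about 63%, because order volume more than doubles over the same period.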

The calculator shows that the effect of reducing cloud OPEX can be estimated before deployment when engineering changes are tied to resource consumption and order volume.

Quote-Ready Principles

The following principles summarize the structural lessons behind this ecommerce cost optimization program. Each statement reflects decisions that contributed to lower infrastructure OPEX while supporting higher transaction volume.

1. Cloud invoices reflect architectural decisions.

2. Adding servers without analysis increases long-term OPEX.

3. Database indexing work often delivers more value than instance upgrades.

4. A well-designed caching strategy reduces autoscaling pressure.

5. Infrastructure right-sizing improves margin immediately.

6. Non-production environments deserve the same cost scrutiny as production.

7. Paid intermediaries compound cost as volume grows.

8. Performance per euro is a practical executive metric.

9. Budget constraints facilitate innovation when teams apply them consistently.

10. Ecommerce cost optimization supports growth when it focuses on structural efficiency.

These principles are reusable across retail platforms. They do not depend on specific tooling or vendor selection.

Anti-Patterns: Cost Mistakes That Erode Margin

Cost pressure often reveals recurring mistakes in ecommerce programs. The following patterns frequently block effective ecommerce cost optimization.

1. Treating Cloud Spend as Fixed Overhead

When infrastructure cost is accepted as unavoidable, optimization work rarely receives priority. Ecommerce cost optimization requires active ownership of usage patterns and architectural efficiency.

2. Scaling Before Reviewing Root Cause

Increasing instance size may relieve pressure temporarily. It does not address inefficient queries, missing indexes, or weak caching coverage. This habit makes it harder to reduce cloud OPEX later.

3. Ignoring Non-Production Infrastructure

Dev and Stage environments often mirror production resources without an equivalent workload. Without infrastructure right-sizing, these environments inflate total spend.

4. Accepting Paid Proxies as Permanent

Third-party intermediaries can appear convenient during early growth. As volume increases, their recurring fees erode margin and reduce control.

5. No Cost Attribution Per Service

Without visibility into which service consumes which resource, FinOps for ecommerce becomes reactive. Cost per order and service-level reporting help connect engineering work to financial outcomes.
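Per-service attribution can begin with a simple aggregation over a billing export. The sketch below assumes that each line item carries a `service` tag and a `cost_eur` amount; both field names are hypothetical tagging conventions, not details from the article. Untagged spend is kept as its own bucket so that it stays visible instead of disappearing into a total.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def cost_by_service(line_items: Iterable[Mapping]) -> Dict[str, float]:
    """Sum billing line items by service tag; untagged spend gets its own bucket."""
    totals: Dict[str, float] = defaultdict(float)
    for item in line_items:
        totals[item.get("service") or "untagged"] += item["cost_eur"]
    return dict(totals)


def cost_per_order_by_service(totals: Dict[str, float], monthly_orders: int) -> Dict[str, float]:
    """Divide each service's monthly cost by the month's order count."""
    return {service: cost / monthly_orders for service, cost in totals.items()}


# Hypothetical billing export rows.
items = [
    {"service": "checkout", "cost_eur": 1_200.0},
    {"service": "catalog", "cost_eur": 2_100.0},
    {"service": "checkout", "cost_eur": 300.0},
    {"cost_eur": 400.0},  # resource without a service tag
]
totals = cost_by_service(items)
print(totals)
print(cost_per_order_by_service(totals, monthly_orders=10_000))
```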

6. Confusing Traffic Growth With Cost Inevitability

Higher traffic does not automatically require proportional cost increases. Database indexing improvements and a stronger caching strategy can absorb growth within the same capacity envelope.

7. Delaying Optimization Until After Peak Season

Teams often postpone improvements until after critical events. However, proactive ecommerce cost optimization reduces risk during peaks and protects margin before demand surges.

Avoiding these anti-patterns supports a cost-efficient architecture that scales sustainably within financial constraints.

Conclusion: Cost Discipline as a Growth Enabler

Ecommerce cost optimization often begins as a cost-control initiative. In this case, it became a growth lever. The Client reduced cloud OPEX by approximately 20–30% while supporting significantly higher order volume and multi-country complexity. Annual intermediary fees of €40–45k were eliminated. These outcomes were achieved within a fixed budget and without compromising stability.

A fixed budget changed how decisions were made. Instead of approving larger instances by default, the team reviewed database indexing gaps, expanded caching coverage, and applied infrastructure right-sizing across services. Each intervention increased throughput within the same resource limits. Performance per euro became a working metric.

For executive teams, the takeaway connects engineering discipline with financial control. Cloud invoices should be analyzed at the level of queries, cache hit ratios, and service utilization. Cost per order should be visible in reporting. Paid intermediaries should be reviewed for long-term margin impact. These practices help reduce cloud OPEX while supporting scale.

This case shows that ecommerce cost optimization does not slow growth but supports it. When cost visibility improves and optimization becomes routine, scale becomes predictable. The result is a platform that supports revenue expansion without allowing infrastructure OPEX to erode margin.
